Author: Georg Heiler

Editorial Contributions: Garima Gujral, Joaquin Melara

The Art of Doing Nothing:

Metaxy is shaped by a simple idea: in complex systems, progress often comes less from doing more work than from becoming precise enough to avoid the wrong work. That is the “art of doing nothing” in this context — not passivity, but disciplined restraint. In compute-heavy AI pipelines, where a small upstream change can trigger cascades of GPU jobs, API calls, and token spend, the highest-leverage improvement is often better judgment about what can safely remain untouched.

Newsletter Outline:

This newsletter covers the key topics around Metaxy’s development as an open-source project:

Managing heterogeneous data for training multimodal

The incident that exposed the real problem

The origins of Metaxy

Becoming precise enough to avoid the wrong work

The missing layer between orchestration and execution

Applications in research and production environments

3 lessons learned developing Metaxy

Improving culture and outcomes for engineers and researchers

Managing Heterogeneous Data for Training Multimodal

Metaxy began with a problem that appeared small but behaved like a tropical storm.

Metaxy was developed by Daniel Gafni (https://gafni.dev/) at Anam (https://anam.ai/), a company building real-time AI video avatars and the multimodal data systems behind them, with a lot of support from Georg Heiler (https://georgheiler.com/). That production setting made the cost of unnecessary recomputation immediately visible: video, audio, transcripts, embeddings, and derived artifacts all move through expensive pipelines. Daniel Gafni, who is employed at Anam, is the main force behind Metaxy; Georg Heiler collaborated with him on Metaxy independently and is not employed by Anam.

At Anam, the team works with multimodal training data: video, audio, transcripts, embeddings, and all the intermediate artifacts needed to build and improve interactive AI systems. But the underlying problem is much broader than one company or one domain. Any team working with compute-intensive pipelines runs into the same type of problem. A small upstream change can ripple outward into hours of additional processing, expensive hosted model calls, large token bills, or long queues on self-managed GPUs. "Just rerun it" sounds simple until it means reprocessing millions of records or paying again for work that has effectively already been done.

The Incident That Exposed the Real Problem

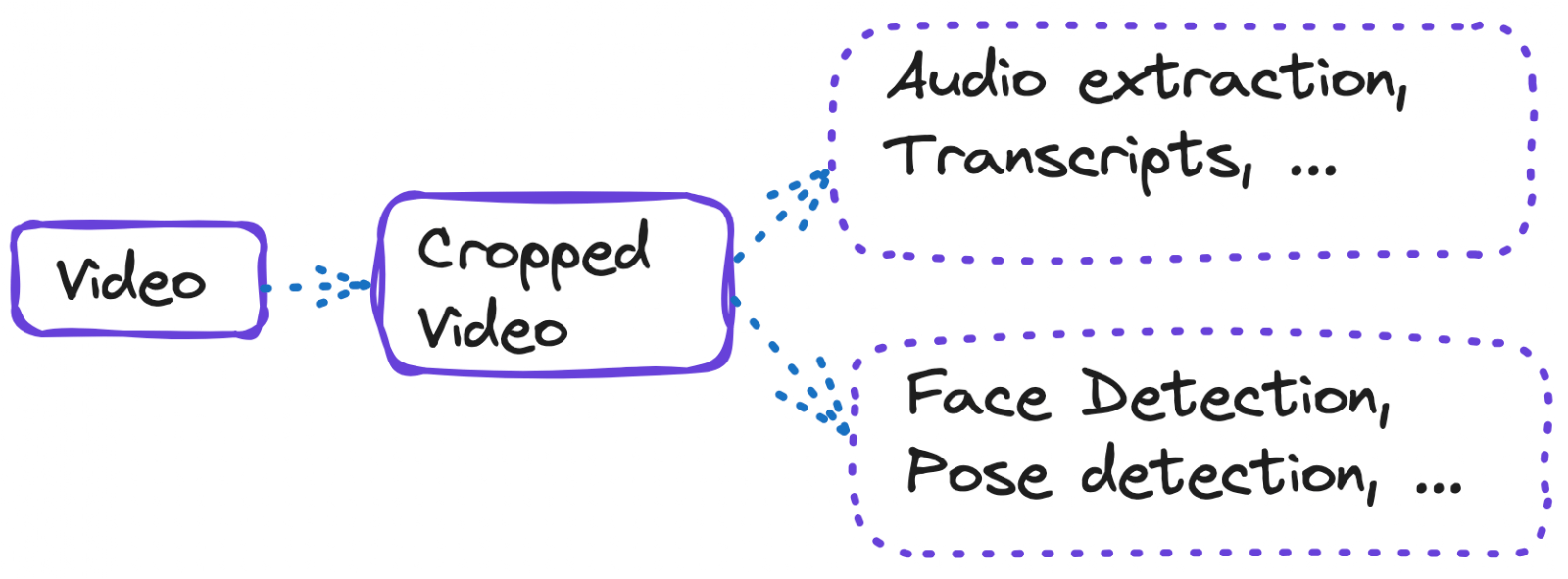

One incident made that painfully concrete. The team changed the crop resolution for video data. It should have been a limited update. But downstream, the pipeline had already split into multiple branches. Some steps truly depended on the video frames. Others only depended on audio. The system in place could not distinguish those cases precisely enough, so it treated far too much work as stale. Branches that should have been left untouched were sent back through costly processing anyway.

That experience exposed the real problem. The issue was not only orchestration, scheduling, or scale. It was granularity. The pipeline could tell that something had changed, but it could not tell precisely what had changed or which downstream work actually depended on it. That is just as true for document pipelines, retrieval systems, multimodal ETL, embedding generation, or LLM-based post-processing as it is for avatar or video workloads.
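The difference between the two levels of granularity can be sketched in a few lines of Python. This is a minimal illustration, not Anam's pipeline or Metaxy's API: the step names, the `STEP_INPUTS` mapping, and both functions are hypothetical and exist only to show why a coarse rule reruns audio-only branches after a change to the frames.

```python
# Hypothetical pipeline steps and the upstream fields each one reads.
# These names are illustrative and do not come from a real pipeline.
STEP_INPUTS = {
    "face_crops": {"frames"},
    "visual_embeddings": {"frames"},
    "speech_transcript": {"audio"},
    "speaker_embeddings": {"audio"},
}


def stale_asset_level(changed_fields: set[str]) -> set[str]:
    """Coarse rule: any change to the video asset marks every step stale."""
    return set(STEP_INPUTS) if changed_fields else set()


def stale_field_level(changed_fields: set[str]) -> set[str]:
    """Fine rule: a step is stale only if it reads a changed field."""
    return {
        step for step, inputs in STEP_INPUTS.items()
        if inputs & changed_fields
    }


# Changing the crop resolution only alters the frames.
changed = {"frames"}
coarse = stale_asset_level(changed)
fine = stale_field_level(changed)

print(sorted(fine))           # steps that genuinely need to rerun
print(sorted(coarse - fine))  # steps the coarse rule reran unnecessarily
```

Under these assumptions, the coarse rule sends all four steps back through processing, while the fine rule leaves the two audio-only steps alone.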

The Origins of Metaxy

That is where Metaxy starts: with a simple ambition to make pipelines smart enough to know what not to do. More specifically, Metaxy addresses a gap that many pipeline systems leave open. Traditional orchestration tools can usually detect that an upstream asset, file, or table has changed, but they often cannot determine with enough precision which exact downstream records or fields are truly affected by that change. As a result, systems fall back to blunt recomputation: rerunning whole branches, reprocessing unchanged samples, and repeating expensive GPU jobs, API calls, embeddings, or token-heavy LLM steps that did not actually need to run again.

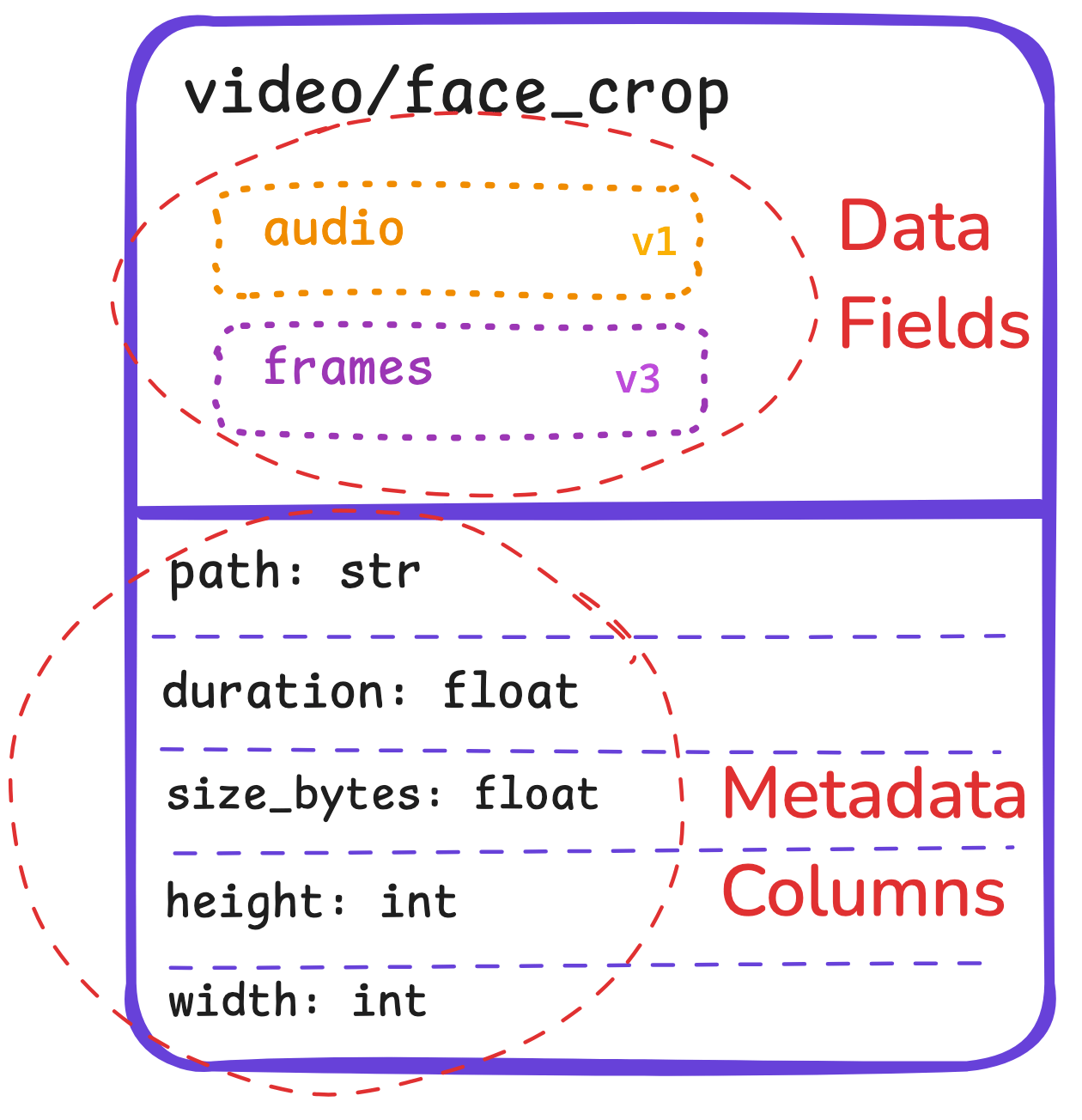

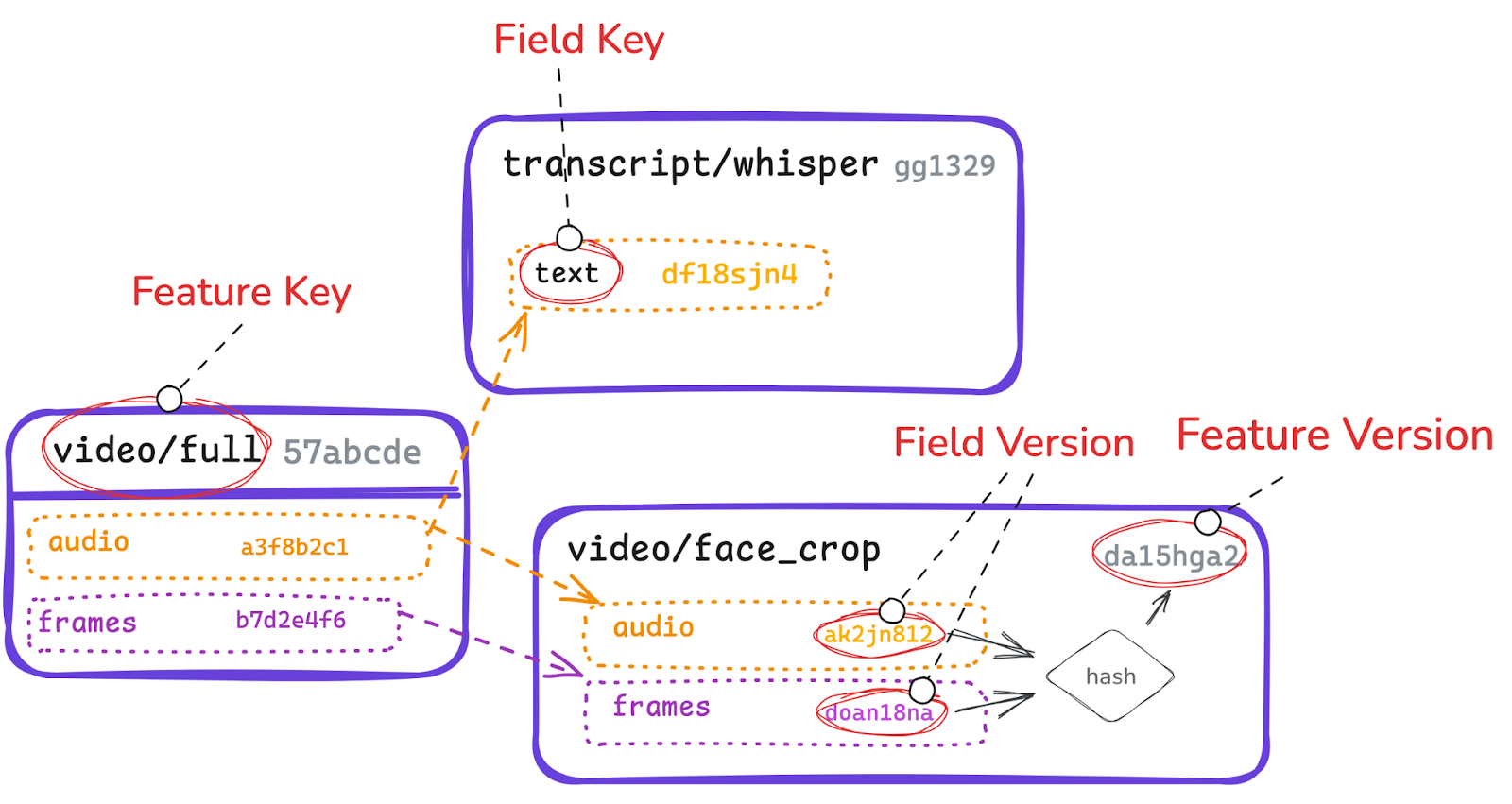

Metaxy closes that gap by acting as a metadata and decision layer for incremental work. It tracks dependencies at a finer level, so the system can distinguish between changes that matter to one branch and changes that do not. Instead of treating a multimodal sample as one indivisible object, Metaxy can reason about which downstream outputs depend on frames, which depend on audio, which depend on text, and which can safely remain untouched. In practice, that means fewer false recomputations, lower costs, faster iteration, and much clearer confidence about what changed, why it changed, and what work really needs to happen next.

Becoming Precise Enough to Avoid the Wrong Work

Most data systems make decisions at the level of files, tables, or assets. For many workflows, that is good enough. But multimodal ML pipelines are different. A single record can carry several separate paths of meaning at once: video frames, audio, text in a document (for OCR or RAG), embeddings, metadata, annotations. Treating that record as one indivisible object is often too blunt.

Metaxy takes a narrower, more careful view. Instead of assigning one version to a whole table or even one version to a whole sample, it tracks dependencies at the field level within each record. In practice, that means the system can see that one downstream result depends on audio while another depends only on frames.
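One way to picture field-level versioning is to derive a version for each output field from its own code version plus the versions of only those upstream fields it reads. The sketch below is an assumption about how such a scheme could look, not a description of Metaxy's internals: `SPEC`, `digest`, and `field_versions` are hypothetical names, and the hashing approach is a generic content-hash technique chosen for illustration.

```python
import hashlib


def digest(*parts: str) -> str:
    """Short, deterministic hash of the given strings."""
    return hashlib.sha256("|".join(parts).encode()).hexdigest()[:12]


# Hypothetical feature spec: each output field has a code version and a
# list of upstream fields it reads. None of these names are Metaxy's API.
SPEC = {
    "face_embedding": {"code": "v1", "deps": ["frames"]},
    "transcript": {"code": "v1", "deps": ["audio"]},
}


def field_versions(upstream: dict[str, str]) -> dict[str, str]:
    """Derive one version per output field from its code and its inputs."""
    return {
        name: digest(cfg["code"], *(upstream[d] for d in cfg["deps"]))
        for name, cfg in SPEC.items()
    }


# Same audio in both runs; only the frame preprocessing differs.
before = field_versions({"frames": digest("crop=256"), "audio": digest("16kHz")})
after = field_versions({"frames": digest("crop=512"), "audio": digest("16kHz")})

changed = [name for name in SPEC if before[name] != after[name]]
print(changed)  # ['face_embedding']
```

Because the transcript's version never mixes in the frames, a change to the crop settings cannot invalidate it. A single version per sample would have changed for both outputs.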

The effect is straightforward.

If the audio logic changes, recompute the audio-dependent outputs.

If the frame logic changes, recompute the vision-dependent outputs.

If a branch is unaffected, leave it alone.

This is the meaning of the Metaxy tagline, "perfecting the art of doing nothing." It is not about avoiding work. It is about becoming precise enough to avoid the wrong work. In modern AI pipelines, that precision matters. It saves money, shortens iteration cycles, and removes the hesitation that comes from knowing a minor change might trigger a major rerun across tokens, GPUs, or both.

The Missing Layer Between Orchestration and Execution

Once the idea of “perfecting the art of doing nothing” became explicit, Metaxy emerged as a missing layer between orchestration and execution.

The existing tools were not the enemy. Orchestrators such as Dagster are excellent at structuring work, coordinating dependencies, and making systems observable: they define jobs, assets, schedules, and high-level dependencies. Execution frameworks and compute backends are excellent at running heavy workloads across local machines, warehouses, clusters, and GPUs. But there was still a gap between those layers. Neither is designed to decide, with fine precision, which exact records and which exact fields within those records actually need to be recomputed.

That is where Metaxy sits in the stack. It operates after orchestration has defined the logical pipeline but before expensive execution begins. As a metadata and decision layer, it resolves the increment first: what changed, which downstream computations truly depend on those changes, and what can safely be skipped. Instead of asking only whether an upstream asset changed, Metaxy asks which records changed, which fields changed within those records, and which downstream branches are actually affected.

Resolving the increment before the expensive work begins is the point. Rather than sending an entire downstream dataset back through GPU-heavy jobs, Metaxy identifies what is new, what is affected, and what can safely be skipped. That sounds like an implementation detail, but operationally it changes the economics of iteration.
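As a rough sketch of what resolving an increment can mean at the record level, the following Python compares the version each record should have now with the version stored when its output was last computed. The `Increment` class, the `resolve_increment` function, and the record ids are hypothetical and are not taken from Metaxy; a real system would run this comparison inside a database or dataframe engine, not in a Python loop.

```python
from dataclasses import dataclass, field


@dataclass
class Increment:
    new: list[str] = field(default_factory=list)      # never computed
    stale: list[str] = field(default_factory=list)    # computed from old inputs
    skipped: list[str] = field(default_factory=list)  # already up to date


def resolve_increment(expected: dict[str, str], stored: dict[str, str]) -> Increment:
    """Compare the version each record should have with the version on record.

    `expected` maps record id -> version derived from current upstream fields.
    `stored` maps record id -> version recorded when the output was last computed.
    """
    inc = Increment()
    for record_id, version in expected.items():
        if record_id not in stored:
            inc.new.append(record_id)
        elif stored[record_id] != version:
            inc.stale.append(record_id)
        else:
            inc.skipped.append(record_id)
    return inc


expected = {"clip-1": "a1", "clip-2": "b7", "clip-3": "c3"}
stored = {"clip-1": "a1", "clip-2": "b2"}

inc = resolve_increment(expected, stored)
to_compute = inc.new + inc.stale
print(to_compute)   # ['clip-3', 'clip-2']
print(inc.skipped)  # ['clip-1']
```

Only the records in `to_compute` would be handed to the expensive step. Everything in `skipped` is left untouched.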

Applications in Research and Production Environments

This matters most in research and production environments where people are constantly adjusting feature logic, prompts, models, preprocessing steps, and evaluation criteria. In those settings, a "small" change is rarely small in cost. It may trigger another wave of API spend, another round of embedding generation, another GPU-heavy inference job, or another long-running batch on a shared cluster. Without fine-grained change detection, teams become defensive. They delay improvements, batch ideas together, or accept waste because the alternative feels operationally risky. With a more precise diff, experimentation becomes much cheaper, both financially and mentally.

3 Lessons Learned Developing Metaxy

The first lesson was that reproducibility and speed do not have to be opposing values. Teams often act as if they must choose between rigorous lineage and fast iteration. Metaxy points to a different answer: make lineage more granular, and the trade-off becomes much less severe.

The second lesson was that compute-intensive pipelines need a different operating model from classic BI systems. In many tabular workflows, rerunning a transformation is inconvenient but acceptable. In AI-heavy pipelines, every unnecessary rerun compounds across GPUs, hosted APIs, token-based billing, storage, and queueing delays. The old table-level mental model breaks down quickly.

The third lesson was architectural. Metaxy is most useful as a metadata and decision layer, not as a replacement for everything around it. That separation matters because it lets teams keep the tools they already rely on while gaining much better control over incremental work.

Improving Culture and Outcomes for Engineers and Researchers

Metaxy now supports real multimodal pipelines processing millions of samples. But the underlying benefit is not limited to multimodal video systems. The same logic applies anywhere expensive computation sits downstream of evolving data and code. The concrete outcomes are easy to understand: fewer false recomputations, less redundant GPU work, fewer repeated token charges, lower costs, faster turnaround, and clearer confidence in what changed and why.

But the more interesting outcome is cultural. When a system becomes more precise, people change how they work with it. Engineers and researchers can make targeted changes without bracing for a full cascade of unnecessary recomputation. That creates more room for exploration, more willingness to improve things incrementally, and better use of limited compute budgets.

So Metaxy is not really about doing less for its own sake. It is about reserving effort for the work that matters and refusing work that does not.

And that is the art of doing nothing.

If you want to improve the use of resources at your organization by doing more with less, get in contact with me for more information.

Sources and further reading

Anam announcement: https://anam.ai/blog/metaxy

GitHub repo: https://github.com/anam-org/metaxy/